Read this in 30 seconds: Autonomous pentesting tools use AI agents to discover attack surfaces, chain exploits, and generate reports without human intervention. They’re fast, scalable, and getting scary good. But they can’t replace human pentesters for business logic flaws, creative attack paths, and the weird stuff that breaks real applications. This guide covers what autonomous pentesting tools actually do, where they dominate, where they fall flat, and how XHack AI is building multi-agent systems that think, adapt, and hunt like actual red teamers.

The cybersecurity industry spent 2025 arguing about whether AI would replace pentesters.

In 2026, the argument is over. And the answer is: it’s complicated.

Autonomous pentesting tools are real. They work. Some of them are genuinely impressive. XBOW just raised $120 million at a billion-dollar valuation. Assail launched Ares with 100 coordinated AI agents per target. Open-source frameworks like PentAGI and BlacksmithAI are letting anyone spin up autonomous pentest agents from a Docker container.

But here’s the thing.

Every vendor is claiming “fully autonomous penetration testing” like it’s a solved problem. It’s not. The gap between what autonomous pentesting tools can do and what a skilled human pentester can do is still significant. It’s just shrinking fast.

I’ve tested these tools. I’ve built with them. I’ve watched them find vulnerabilities that would take a human team days to discover, and I’ve watched them completely miss business logic flaws that a junior pentester would catch in 20 minutes.

This article is the honest breakdown. What autonomous pentesting tools are actually good for, where they fail, and what the future looks like.

What Are Autonomous Pentesting Tools? Not Just Scanners With ChatGPT Bolted On

An autonomous pentesting tool is an AI system that can plan, execute, and report on a penetration test without step-by-step human guidance.

That’s the critical distinction. Traditional scanners (Nessus, Qualys, Burp Suite) follow predefined rules. They check for known CVEs, run signature-based tests, and produce a list of findings. They don’t think. They don’t adapt. They don’t chain exploits together.

Autonomous pentesting tools are fundamentally different. They have a feedback loop.

Here’s how that loop works:

1. Reconnaissance – The AI agent discovers the target’s attack surface. Subdomains, open ports, services, web technologies, authentication mechanisms.

2. Analysis – The agent reasons about what it found. “This is a Django application with a login panel. I should check for default credentials, then test for SQL injection on the authentication endpoint, then look for IDOR on authenticated endpoints.”

3. Exploitation – The agent attempts exploits, reads the response, and adapts. If the first SQL injection payload fails, it tries a different technique. If it gets a 403, it looks for bypass methods.

4. Chaining – This is the big one. The agent chains successful findings together. “I found an SSRF that lets me reach the internal network. Now I’ll use that to scan internal services. Found an unpatched Redis instance. I’ll use that to gain code execution.”

5. Reporting – The agent documents the full attack chain with evidence, severity ratings, and remediation guidance.

The difference between “automated scanning” and “autonomous pentesting” is steps 2 through 4. Scanners do step 1 and skip to step 5. Autonomous pentesting tools reason through the entire attack like a human would.
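The five-step loop can be sketched in a few lines of Python. This is a toy model, not any vendor's implementation. The target is a hypothetical in-memory dictionary, "exploiting" just compares a technique name against a planted weakness, and the playbook that maps services to techniques is invented for illustration. It does show the two things scanners lack. A failed attempt feeds back into trying the next technique, and a success unlocks new assets that re-enter the loop (chaining).

```python
from collections import deque

# Hypothetical simulated environment. Each asset exposes services and has one
# planted weakness; exploiting it grants reach to the assets in "unlocks".
TARGET = {
    "web.example.test": {"services": ["http"], "weakness": "ssrf",
                         "unlocks": ["redis.internal"]},
    "redis.internal": {"services": ["redis"], "weakness": "unauth-redis",
                       "unlocks": []},
}

# Hypothetical playbook: techniques to try, in order, for each service.
PLAYBOOK = {
    "http": ["default-creds", "sqli", "ssrf"],
    "redis": ["unauth-redis"],
}


def recon(host):
    """Step 1: discover what the asset exposes."""
    return TARGET[host]["services"]


def analyze(services):
    """Step 2: turn the observed surface into an ordered attack plan."""
    return [t for svc in services for t in PLAYBOOK.get(svc, [])]


def exploit(host, technique):
    """Step 3: attempt a technique and report whether it worked."""
    return TARGET[host]["weakness"] == technique


def run(entry_point):
    """Steps 1-4 in a loop; returns the attack chain for step 5."""
    frontier, seen, chain = deque([entry_point]), set(), []
    while frontier:
        host = frontier.popleft()
        if host in seen:
            continue
        seen.add(host)
        for technique in analyze(recon(host)):
            if exploit(host, technique):  # on failure, fall through to the next technique
                chain.append((host, technique))
                frontier.extend(TARGET[host]["unlocks"])  # step 4: chaining
                break
    return chain


def report(chain):
    """Step 5: render the chain as evidence, one hop per line."""
    return "\n".join(f"{i}. {host}: {tech}" for i, (host, tech) in enumerate(chain, 1))


if __name__ == "__main__":
    print(report(run("web.example.test")))
```

A pure scanner would stop after listing the services on `web.example.test`. It would never reach `redis.internal`, because that asset only becomes reachable after the SSRF succeeds.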

The Autonomy Spectrum

Not all autonomous pentesting tools are created equal. Think of it as a spectrum:

| Level | Description | Examples |
|---|---|---|
| L1: Automated Scanning | Fixed rules, signature matching, no reasoning | Nessus, Qualys, OpenVAS |
| L2: AI-Assisted Scanning | Scanner + AI for prioritization/triage | Burp Suite with AI extensions |
| L3: Human-Guided AI | AI executes but human directs strategy | PentestGPT, Cobalt AI |
| L4: Semi-Autonomous | AI plans and executes with human oversight | BlacksmithAI, Zen-AI-Pentest |
| L5: Fully Autonomous | AI handles end-to-end with no human input | XBOW, Ares, XHack AI |

Most tools claiming “autonomous pentesting” in 2026 sit at L3 or L4. True L5 autonomy, where the AI handles the entire engagement from scoping through exploitation and reporting, is still emerging. But it’s getting closer every month.

What Autonomous Pentesting Tools Are Actually Good At

Let’s be real about where these tools dominate. Because when they work, they’re genuinely impressive.

Speed and Scale

A human pentester can thoroughly test maybe 2-3 applications per week. An autonomous pentesting tool can test 50+ in the same timeframe. For organizations with hundreds of applications, APIs, and services, this is transformative.

The math is simple: if you have 500 applications and can only afford one annual pentest covering your top 10, the other 490 never get tested. Autonomous pentesting tools make it possible to test everything, continuously, at machine speed.

Consistency and Coverage

Human pentesters have good days and bad days. They have specialties and blind spots. One tester might be excellent at web app testing but mediocre at Active Directory attacks.

Autonomous pentesting tools apply the same thoroughness to every target, every time. They don’t get tired at 4 PM on a Friday. They don’t skip tests because they’re running behind schedule.

Known Vulnerability Exploitation

For known CVEs and documented attack techniques, autonomous pentesting tools are often faster and more reliable than humans. They maintain updated databases of exploit techniques, test methodically against each service version, and validate exploitability with proof-of-concept evidence.

Continuous Testing

This is the killer feature. Traditional pentesting happens once or twice a year. Between tests, your attack surface changes daily. New code deploys, new services spin up, configurations drift, new CVEs get published.

Autonomous pentesting tools integrate into CI/CD pipelines and run continuously. Every deployment gets tested. Every change gets validated. The gap between “code deployed” and “security tested” shrinks from months to minutes.
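To illustrate the CI/CD integration, here is a sketch of a pipeline gate that fails a build when validated findings meet a severity threshold. It assumes the testing tool writes its results to a JSON file as a list of objects with `id`, `severity`, and `validated` keys. That format is hypothetical, so adapt the field names to whatever your tool actually emits.

```python
import json
import sys

SEVERITY_RANK = {"info": 0, "low": 1, "medium": 2, "high": 3, "critical": 4}


def blocking_findings(findings, threshold="high"):
    """Return validated findings at or above the severity threshold."""
    limit = SEVERITY_RANK[threshold]
    return [
        f for f in findings
        if f.get("validated") and SEVERITY_RANK[f["severity"]] >= limit
    ]


def main(path, threshold="high"):
    # Hypothetical report format: [{"id": ..., "severity": ..., "validated": ...}]
    with open(path) as fh:
        findings = json.load(fh)
    blocking = blocking_findings(findings, threshold)
    for f in blocking:
        print(f"BLOCKING: {f['id']} ({f['severity']})")
    return 1 if blocking else 0  # a non-zero exit code fails the CI job


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python gate.py findings.json [threshold]
    sys.exit(main(*sys.argv[1:3]))
```

Gating only on validated findings is a deliberate choice. Unconfirmed noise gets reviewed, but it does not block a deploy.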

Where Autonomous Pentesting Tools Completely Fall Apart

Now the honest part. Because nobody writing the marketing copy for these tools wants to talk about the failures.

Business Logic Flaws

This is the biggest gap. Business logic vulnerabilities require understanding what the application is supposed to do, then figuring out how to make it do something it shouldn’t.

“Can I apply a discount code twice?” “Can I change the quantity to -1 and get a refund?” “Can I access another user’s order by modifying the order ID?” “Can I skip the payment step by directly hitting the confirmation endpoint?”

These vulnerabilities don’t have signatures. They don’t follow patterns that AI can learn from generic training data. They require understanding the specific business context of each application. Autonomous pentesting tools are getting better at IDOR detection, but complex multi-step business logic flaws remain firmly in the human domain.
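To see why these flaws have no signature, consider a hypothetical checkout calculation. Every request it receives is well-formed: integer quantities and string discount codes. None of the input contains anything a pattern-matching scanner looks for. Even so, the vulnerable version computes a negative total (a refund) from a quantity of -1 or from a reused code. The fix is not input sanitization. It is enforcing business rules the code never stated.

```python
# Prices are in cents. DISCOUNTS is a hypothetical code table.
DISCOUNTS = {"SAVE10": 1000}


def total_vulnerable(items, codes):
    """items: list of (unit_price_cents, quantity); codes: applied discount codes."""
    subtotal = sum(price * qty for price, qty in items)
    discount = sum(DISCOUNTS[code] for code in codes)
    return subtotal - discount  # nothing stops this from going negative


def total_fixed(items, codes):
    """Same calculation, with the business rules made explicit."""
    if any(qty < 1 for _, qty in items):
        raise ValueError("quantity must be at least 1")
    if len(set(codes)) != len(codes):
        raise ValueError("each discount code may be applied only once")
    subtotal = sum(price * qty for price, qty in items)
    discount = sum(DISCOUNTS[code] for code in codes)
    return max(subtotal - discount, 0)  # a discount can never produce a refund


if __name__ == "__main__":
    # "Can I change the quantity to -1 and get a refund?"
    print(total_vulnerable([(5000, 1), (7500, -1)], []))        # -2500
    # "Can I apply a discount code twice?"
    print(total_vulnerable([(1500, 1)], ["SAVE10", "SAVE10"]))  # -500
```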

Social Engineering

No autonomous pentesting tool can call your receptionist and convince them to let a “vendor” into the server room. No AI agent can craft a targeted spearphishing email that references the CEO’s recent LinkedIn post. Social engineering remains 100% human territory.

Novel and Creative Attack Paths

Human pentesters find weird stuff. The kind of vulnerability that makes you say “how did you even think to try that?” A race condition between the payment processor and the inventory system. A path traversal through a file upload that only works with a specific Content-Type header. An authentication bypass that requires sending a request with a deliberately malformed JWT.

Autonomous pentesting tools test known attack patterns efficiently. They don’t invent new ones.

Physical Security and Complex Multi-Environment Attacks

Badge cloning, lock picking, dumpster diving, USB drops. Autonomous pentesting tools live in the digital world. Physical security testing requires actual humans in actual buildings.

Enterprise environments with hybrid cloud, on-prem Active Directory, legacy mainframes, and custom middleware create attack paths that span multiple technologies. Autonomous pentesting tools handle individual technology stacks well but struggle with creative cross-environment chaining.

| Capability | Autonomous Tools | Human Pentesters | Winner |
|---|---|---|---|
| Speed and scale | Hundreds of targets simultaneously | 2-3 per week | Autonomous |
| Known CVE exploitation | Comprehensive, methodical | Selective, sometimes incomplete | Autonomous |
| Business logic flaws | Basic IDOR detection only | Deep contextual understanding | Human |
| Social engineering | Can’t do it | Core skill | Human |
| Continuous testing | 24/7 automated | Annual/quarterly engagements | Autonomous |
| Novel attack paths | Pattern-based only | Creative lateral thinking | Human |
| Cost per test | $500-$5,000 | $15,000-$100,000+ | Autonomous |

Real-World Use Cases for Autonomous Pentesting Tools

Use Case 1: Continuous Web Application Testing

Scenario: SaaS company with 30+ microservices deploying 50 times per week.

Problem: An annual pentest covers a single snapshot. At 50 deployments per week, nearly all of the roughly 2,600 deployments a year go untested.

Solution: Autonomous pentesting tools integrated into CI/CD pipeline test every deployment automatically. Human pentesters run quarterly deep-dive assessments on the most critical services.

Use Case 2: Android and Mobile App Testing

Scenario: Fintech company with Android and iOS apps handling payment data.

Problem: Mobile pentesting requires specialized skills and expensive manual testing.

Solution: Autonomous pentesting tools handle the automated surface: API endpoint testing, certificate pinning validation, data storage analysis, authentication flow testing. Human testers focus on business logic like transaction manipulation and privilege escalation between user roles.

Use Case 3: Enterprise Network Assessment

Scenario: Healthcare organization with 5,000 hosts across 15 VLANs.

Problem: Manual internal pentesting of 5,000 hosts would take months and cost $200k+.

Solution: Autonomous pentesting tools scan the full network, identify misconfigurations, test default credentials, and chain initial access to lateral movement. Human red teamers handle Active Directory attack paths and medical device exploitation.

Use Case 4: Third-Party Vendor Assessment

Scenario: Enterprise with 200+ SaaS vendors, each with API integrations.

Problem: You can’t pentest 200 vendors manually. Budget and time don’t exist.

Solution: Autonomous pentesting tools run surface-level assessments against all 200 vendor integrations. Flag the high-risk ones for manual deep-dive testing.

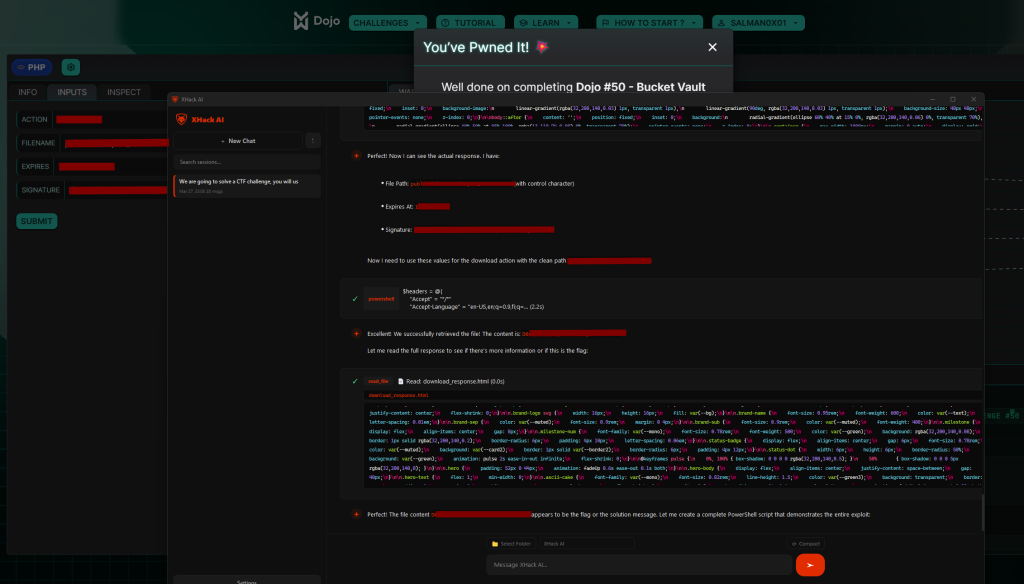

How XHack AI Approaches Autonomous Pentesting

Most autonomous pentesting tools in 2026 run a single AI agent that follows a linear process: scan, find, exploit, report. Some deploy a swarm of agents that each focus on a single attack vector.

XHack AI takes a different approach with multi-agent architecture that mirrors how actual red teams operate.

Multi-Agent Intelligence

XHack AI doesn’t use one agent trying to do everything. It deploys specialized agents that collaborate:

- Recon agents handle attack surface discovery and technology fingerprinting

- Analysis agents reason about findings and prioritize attack paths

- Exploit agents attempt exploitation with adaptive technique selection

- Validation agents confirm findings and assess business impact

- Reporting agents compile evidence into structured, actionable reports

These agents communicate, share context, and coordinate their work. When the recon agent discovers a new subdomain, the analysis agent evaluates it, and the exploit agent tests it, all without human intervention.
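The coordination described here is essentially publish/subscribe. The sketch below wires five hypothetical agent roles to a tiny in-process message bus. It is not XHack AI's architecture, only a minimal illustration of how one agent's discovery can trigger work in the next without a human in between. Real agents would call tools and models where these stubs use hard-coded logic, and `WEAK_HOSTS` stands in for whatever the exploit step would actually observe.

```python
from collections import defaultdict, deque


class Bus:
    """Minimal synchronous publish/subscribe bus shared by all agents."""

    def __init__(self):
        self.subscribers = defaultdict(list)
        self.queue = deque()

    def subscribe(self, topic, handler):
        self.subscribers[topic].append(handler)

    def publish(self, topic, payload):
        self.queue.append((topic, payload))

    def run(self):
        # Deliver messages in order until no agent has anything left to say.
        while self.queue:
            topic, payload = self.queue.popleft()
            for handler in self.subscribers[topic]:
                handler(payload)


WEAK_HOSTS = {"admin.example.test"}  # hypothetical ground truth for the demo


def wire_agents(bus, report):
    def recon(domain):  # stand-in for real subdomain enumeration
        for sub in ("www", "admin"):
            bus.publish("asset.discovered", f"{sub}.{domain}")

    def analysis(host):  # prioritize: admin panels get a default-credential test
        if host.startswith("admin."):
            bus.publish("path.prioritized", {"host": host, "technique": "default-creds"})

    def exploit(plan):  # simulated attempt against the hypothetical ground truth
        bus.publish("finding.candidate", {**plan, "worked": plan["host"] in WEAK_HOSTS})

    def validation(finding):  # only confirmed results move on to reporting
        if finding["worked"]:
            bus.publish("finding.confirmed", finding)

    bus.subscribe("scope.added", recon)
    bus.subscribe("asset.discovered", analysis)
    bus.subscribe("path.prioritized", exploit)
    bus.subscribe("finding.candidate", validation)
    bus.subscribe("finding.confirmed", report.append)  # reporting agent


if __name__ == "__main__":
    bus, report = Bus(), []
    wire_agents(bus, report)
    bus.publish("scope.added", "example.test")
    bus.run()
    print(report)
```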

Self-Aware Decision Making

Here’s what separates XHack AI from most autonomous pentesting tools: the agents are aware of their own capabilities and limitations.

When XHack AI encounters a situation it can’t handle confidently, it flags it for human review instead of making a bad decision. It knows the difference between “I’m 95% confident this is exploitable” and “I found something weird but I’m not sure what it means.”

This self-awareness cuts down the false positive noise that plagues most autonomous pentesting tools. When XHack AI reports a finding, the confidence is earned.
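One simple way to model this behavior is to route every finding on two signals: whether exploitation was actually demonstrated, and how confident the agent is. The thresholds below are hypothetical placeholders, not XHack AI's values.

```python
from dataclasses import dataclass

REPORT_THRESHOLD = 0.95  # hypothetical: "I'm 95% confident this is exploitable"
REVIEW_THRESHOLD = 0.40  # hypothetical: "something weird, not sure what it means"


@dataclass
class Finding:
    title: str
    confidence: float           # agent's self-assessed confidence, 0.0-1.0
    exploit_demonstrated: bool  # did the agent actually prove it?


def route(finding: Finding) -> str:
    """Decide where a finding goes instead of reporting everything."""
    if finding.exploit_demonstrated and finding.confidence >= REPORT_THRESHOLD:
        return "report"
    if finding.confidence >= REVIEW_THRESHOLD:
        return "human_review"  # uncertain but interesting: escalate, don't guess
    return "log_only"          # kept for context, never surfaced as a finding
```

A high-confidence hunch without a demonstrated exploit still goes to a human rather than straight into the report.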

Browser-Based Live Hunting

Most autonomous pentesting tools interact with targets through APIs and command-line tools. XHack AI’s autonomous browsing engine controls a real browser to navigate websites, interact with applications, fill out forms, click buttons, and test authentication flows exactly like a human attacker would.

This browser-based approach catches vulnerabilities that API-level testing misses entirely. DOM-based XSS, client-side authentication bypasses, JavaScript-rendered content, multi-step workflows that require actual browser interaction. If a vulnerability only appears when you click through a 5-step form wizard, XHack AI’s browser agent finds it. Command-line tools never will.

Speed With Intelligence

XHack AI is fast. But it’s intelligent about being fast. It doesn’t blindly spam 10,000 requests per second and crash the target. It throttles based on response times, respects rate limits, and adjusts its approach based on the target’s behavior.
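Response-aware throttling is commonly done with additive-increase/multiplicative-decrease (AIMD): speed up gently while the target stays healthy, and back off sharply at the first sign of strain. The class below is a generic sketch of that idea, not XHack AI's implementation. The starting rate, the bounds, and the 800 ms "slow" cutoff are all assumptions.

```python
class AdaptiveThrottle:
    """AIMD rate controller driven by each response's status and latency."""

    def __init__(self, start_rps=5.0, min_rps=0.5, max_rps=50.0, slow_ms=800):
        self.rps = start_rps
        self.min_rps = min_rps
        self.max_rps = max_rps
        self.slow_ms = slow_ms
        self.pause_s = 0.0  # extra wait requested by the server, if any

    def observe(self, status, latency_ms, retry_after_s=None):
        """Update the request rate after one response; returns the new rate."""
        strained = status in (429, 503) or latency_ms > self.slow_ms
        if strained:
            self.rps = max(self.min_rps, self.rps / 2)  # multiplicative decrease
        else:
            self.rps = min(self.max_rps, self.rps + 1)  # additive increase
        # Honor an explicit Retry-After hint from a rate limiter.
        self.pause_s = retry_after_s if retry_after_s is not None else 0.0
        return self.rps

    def next_delay(self):
        """Seconds to wait before sending the next request."""
        return self.pause_s + 1.0 / self.rps
```

In a request loop you would call `time.sleep(throttle.next_delay())` before each request and `throttle.observe(...)` after it.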

It also makes critical decisions autonomously. If it discovers evidence of active compromise during a pentest (real attackers already in the network), it escalates immediately rather than continuing the engagement. If it detects a critical vulnerability that could cause data loss if exploited further, it stops, documents the proof, and flags it without pushing deeper. That kind of judgment is what separates intelligent autonomous pentesting tools from dumb automation.
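The two judgment calls in this paragraph amount to a precedence-ordered policy checked after every step. Here is a minimal sketch of it. The observation keys are hypothetical names for signals an agent would have to derive itself; reliably detecting an active compromise is the genuinely hard part and is not shown.

```python
from enum import Enum


class Action(Enum):
    CONTINUE = "continue"
    STOP_AND_DOCUMENT = "stop_and_document"
    HALT_AND_ESCALATE = "halt_and_escalate"


def next_action(observation: dict) -> Action:
    """Check safety conditions in priority order after every engagement step."""
    # Evidence of a real, ongoing intrusion outranks everything else.
    if observation.get("active_compromise_indicators"):
        return Action.HALT_AND_ESCALATE
    # Proof already exists; going deeper could destroy or corrupt data.
    if observation.get("further_exploitation_risks_data_loss"):
        return Action.STOP_AND_DOCUMENT
    return Action.CONTINUE
```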

Deep Reconnaissance

XHack AI’s recon capability goes beyond subdomain enumeration and port scanning. It performs deep reconnaissance including technology stack fingerprinting, employee enumeration via OSINT, exposed credential detection across breach databases, cloud asset discovery across AWS/Azure/GCP, and historical data analysis through archive services and Certificate Transparency logs. The recon agents feed findings directly to analysis agents, which build a comprehensive attack surface map before any exploitation begins.
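As a concrete piece of the Certificate Transparency step, public CT search services such as crt.sh can return matching certificates as JSON. In crt.sh's output, each entry's `name_value` field holds one or more hostnames separated by newlines. The sketch parses such a payload offline, strips wildcards, and keeps only names under the target domain. Verify the field name against the service you actually query; the sample payload below is made up. It needs Python 3.9+ for `str.removeprefix`.

```python
import json


def subdomains_from_ct(payload: str, domain: str) -> list[str]:
    """Extract unique in-scope hostnames from a crt.sh-style JSON payload."""
    domain = domain.lower()
    names = set()
    for entry in json.loads(payload):
        # One certificate can cover several names, one per line.
        for name in entry.get("name_value", "").splitlines():
            name = name.strip().lower().removeprefix("*.")
            if name == domain or name.endswith("." + domain):
                names.add(name)
    return sorted(names)


if __name__ == "__main__":
    sample = json.dumps([  # made-up example data
        {"name_value": "www.example.com\nexample.com"},
        {"name_value": "*.api.example.com"},
        {"name_value": "unrelated.example.org"},
    ])
    print(subdomains_from_ct(sample, "example.com"))
```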

The Honest Future of Autonomous Pentesting Tools

Here’s where this is heading.

Within 2-3 years, autonomous pentesting tools will handle 80% of what junior-to-mid-level pentesters do today. Known vulnerability exploitation, credential testing, configuration auditing, basic web app testing, and network assessment will be largely automated.

The remaining 20% will become more valuable, not less. Creative attack paths, business logic testing, social engineering, physical security, and zero-day discovery. Senior pentesters who can do what AI can’t will command higher rates than ever.

The smart play for security teams:

- Use autonomous pentesting tools for breadth, coverage, and continuous testing

- Use human pentesters for depth, creativity, and business-context testing

- Feed findings from both into your SOC for continuous monitoring

- Stop treating pentesting as an annual checkbox and start treating it as continuous validation

The organizations that figure out this hybrid model first will have the strongest security posture. The ones that go all-in on autonomous tools and fire their pentesters will have great coverage of known attack patterns and zero coverage of the creative stuff that actually causes breaches.

FAQ: Autonomous Pentesting Tools Questions Answered

Can autonomous pentesting tools fully replace human pentesters?

No. Not in 2026, and not in the near future. Autonomous pentesting tools excel at known vulnerability exploitation, configuration testing, credential attacks, and continuous scanning. But they can’t replace human creativity for business logic flaws, social engineering, physical security, and novel attack paths. The best approach is hybrid: autonomous pentesting tools for breadth and speed, humans for depth and creativity.

Are autonomous pentesting tools safe to run against production systems?

Most commercial autonomous pentesting tools are designed for production safety. They avoid destructive actions, throttle request rates, and respect scope boundaries. However, “production-safe” doesn’t mean “zero-risk.” Always run autonomous pentesting tools against staging first, define clear scope boundaries, and have rollback procedures in place. Tools like XHack AI include self-aware decision making that avoids aggressive actions when it detects production sensitivity.
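Scope boundaries are easiest to respect when every outbound request passes through an explicit check. Here is a minimal sketch that uses only the standard library. The hostnames and CIDR range are hypothetical placeholders for a real rules-of-engagement document.

```python
import ipaddress
from urllib.parse import urlsplit

# Hypothetical rules of engagement.
SCOPE_HOSTS = {"staging.example.test"}
SCOPE_SUFFIXES = (".staging.example.test",)
SCOPE_NETWORKS = [ipaddress.ip_network("10.20.0.0/16")]


def in_scope(url: str) -> bool:
    """Return True only if the URL's host is explicitly allowed."""
    host = urlsplit(url).hostname  # lowercased, with port and brackets stripped
    if not host:
        return False
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Not an IP literal: match exact hostnames or allowed subdomains.
        return host in SCOPE_HOSTS or host.endswith(SCOPE_SUFFIXES)
    return any(addr in net for net in SCOPE_NETWORKS)
```

This check does not resolve DNS. An in-scope hostname that resolves to an out-of-scope address would still pass, so a stricter version would resolve the name and check the resulting addresses too.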

What’s the cost difference between autonomous pentesting tools and manual pentesting?

A manual penetration test for a single application typically costs $15,000 to $100,000+ depending on scope and complexity. Autonomous pentesting tools range from free (open-source options such as PentAGI and BlacksmithAI) to $500-$5,000 per assessment for commercial platforms. Testing 100 applications manually would cost $1.5M+. Testing them with autonomous pentesting tools costs a fraction of that. But you still need human testers for the critical applications requiring deep-dive assessment.
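Here is the arithmetic behind these figures, using the price ranges quoted in this answer. For simplicity it assumes one autonomous assessment per application. The hybrid line is a hypothetical mix: all 100 applications tested autonomously, plus manual deep dives on 10 critical ones.

```python
MANUAL = (15_000, 100_000)  # USD per application (range quoted above)
AUTONOMOUS = (500, 5_000)   # USD per assessment; assumed one per application


def cost(n_apps, price_range):
    """Low and high total cost for testing n_apps at a per-app price range."""
    low, high = price_range
    return n_apps * low, n_apps * high


manual = cost(100, MANUAL)          # (1500000, 10000000)
autonomous = cost(100, AUTONOMOUS)  # (50000, 500000)
# Hypothetical hybrid: all 100 apps tested autonomously, 10 critical apps also manually.
hybrid = tuple(a + m for a, m in zip(autonomous, cost(10, MANUAL)))  # (200000, 1500000)

if __name__ == "__main__":
    for label, (low, high) in [("manual", manual), ("autonomous", autonomous), ("hybrid", hybrid)]:
        print(f"{label:<10} ${low:,} to ${high:,}")
```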

How do autonomous pentesting tools handle false positives?

This varies significantly. Basic autonomous pentesting tools have high false positive rates because they report everything the scanner flags. Advanced tools like XHack AI validate findings by actually exploiting vulnerabilities and providing proof-of-concept evidence. If the tool can’t demonstrate exploitation, it downgrades the finding or flags it for manual verification. The key differentiator is whether the tool confirms exploitability or just reports theoretical risk.
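A common way to separate "confirmed" from "theoretical" for reflected injection is a unique canary. The tool sends a random marker wrapped in markup, then checks whether it comes back unencoded. The sketch below runs that check against in-memory stand-in handlers, which are hypothetical so that the example works offline. An unencoded reflection is strong evidence, but not full proof of script execution; a real tool would go on to show the payload actually runs in a browser.

```python
import html
import secrets


def make_canary():
    """Random marker that cannot plausibly appear in a page by chance."""
    return "xh" + secrets.token_hex(4)


def probe(handler, canary):
    """Send a canary wrapped in a tag and classify how it comes back."""
    payload = f"<{canary}>"
    body = handler(payload)
    if payload in body:
        return "confirmed"     # markup reflected verbatim: injectable
    if canary in body:
        return "needs_review"  # reflected but encoded or altered: downgrade
    return "not_reflected"     # no evidence, nothing to report


# Hypothetical stand-ins for a target's search endpoint.
def vulnerable_search(q):
    return f"<p>Results for {q}</p>"


def escaped_search(q):
    return f"<p>Results for {html.escape(q)}</p>"


def no_reflection(q):
    return "<p>No results</p>"
```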

Conclusion

Autonomous pentesting tools are the most significant shift in offensive security since the release of Metasploit.

They’re not perfect. They won’t replace the senior pentester who finds the weird authentication bypass nobody else would think to test. They won’t social engineer their way into your server room.

But they will test your 500 applications continuously, find the default credentials nobody changed, catch the unpatched service that should have been decommissioned, and chain three low-severity findings into a critical attack path at 3 AM while everyone is asleep.

The future of autonomous pentesting tools is hybrid. AI handles the volume. Humans handle the edge cases. Together they cover more ground than either could alone.
