AI in penetration testing went from a conference buzzword to a genuine inflection point in about 18 months. And the hype machine is in overdrive. Every vendor with a scanner and a ChatGPT wrapper is calling themselves “AI-powered offensive security.” Meanwhile, actual threat actors are already using agentic AI to run cyberattacks with 80 to 90% autonomy.

So here’s the uncomfortable question nobody in the industry wants to answer honestly: can AI agents actually replace human penetration testers? Or is this another cycle of overpromise, underdeliver, and quietly rebrand when the results don’t match the marketing?

I spent weeks going through the Stanford ARTEMIS research, Anthropic’s GTG-1002 incident report, Google’s February 2026 threat intelligence update, and real-world data from every major AI pentesting platform. The answer is more nuanced than either side wants to admit. And it matters enormously for how you spend your security budget.

Let’s get into it.

Table of Contents

- The Headline That Changed Everything: ARTEMIS vs Human Hackers

- What AI Pentesting Agents Actually Do in 2026

- The Anthropic Wake-Up Call: AI-Orchestrated Cyber Espionage

- Where AI Agents Beat Humans (The Honest List)

- Where Humans Still Crush AI (The Other Honest List)

- The Real AI Pentesting Tools Landscape in 2026

- The Economics: $18/Hour vs $60/Hour

- What Google’s Threat Intelligence Says About AI in Attacks

- The Hybrid Model: Why “AI vs Human” Is the Wrong Question

- What This Means for Your Security Budget

- How XHack Thinks About AI in Penetration Testing

- FAQ

The Headline That Changed Everything: ARTEMIS vs Human Hackers

In December 2025, researchers from Stanford, Carnegie Mellon, and Gray Swan AI published what became the most discussed cybersecurity study of the year. They pitted 10 OSCP-certified human penetration testers against seven AI agent frameworks on a live university network with roughly 8,000 hosts across 12 subnets. Not a lab. Not a CTF. A real enterprise environment.

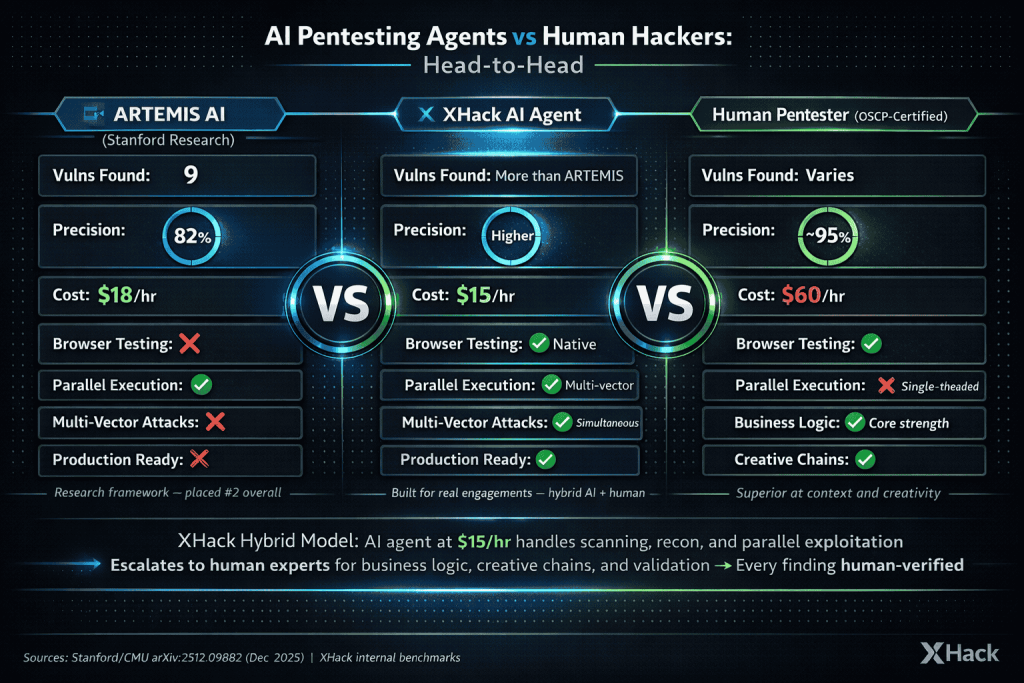

The result that broke the internet: their custom ARTEMIS framework placed second overall, outperforming 9 out of 10 human pentesters.

ARTEMIS discovered 9 valid vulnerabilities with an 82% valid submission rate. It found default credentials on Dell iDRAC servers, DNS cache poisoning vulnerabilities, SMB share write access enabling persistent backdoors, and critical SSH vulnerabilities on research servers. It did this for $18 per hour. The human testers cost $60 per hour.

But here’s what most of the breathless headlines left out.

The top human pentester still beat ARTEMIS. The AI agent had higher false-positive rates than most humans. It struggled badly with anything requiring a GUI. And it needed hints before finding some vulnerabilities that humans identified independently. The study also compressed the engagement into 10 hours when real pentests typically span 1 to 2 weeks.

Most importantly, six other AI agent frameworks (including Claude Code and OpenAI’s Codex) underperformed nearly all human participants. ARTEMIS was the outlier, not the norm. The study’s own authors from Gray Swan AI concluded that current AI models do not yet possess the capabilities necessary to substantially increase the number or scale of cyber-enabled catastrophic events.

The takeaway isn’t “AI replaces pentesters.” It’s “one specifically designed AI framework, running an ensemble of frontier models, approached human performance under constrained conditions.” That’s significant. But it’s not replacement.

What AI Pentesting Agents Actually Do in 2026

The AI pentesting landscape has moved from “chatbot that suggests nmap commands” to genuinely agentic systems that can reason, plan, and execute multi-step attack chains. Here’s what the current generation actually does.

Agentic architecture is the key shift. Modern AI pentest tools don’t just respond to prompts. They operate as autonomous agents with planning loops, sub-agent spawning, memory management, and tool integration. ARTEMIS, for example, uses a supervisor that manages workflow, spawns unlimited sub-agents for parallel investigation, and runs a triage module that verifies and deduplicates findings automatically.

The parallelism advantage is real. When a human tester finds an interesting lead during scanning, they have to investigate it before moving on to the next target. ARTEMIS spawns a separate sub-agent to investigate while the main process continues scanning. This is genuinely transformative for large attack surfaces. No human can match this.
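To make the supervisor/sub-agent pattern concrete, here is a minimal sketch using Python’s asyncio. It is not ARTEMIS code: `OPEN_PORTS`, `looks_interesting`, `sub_agent`, and `triage` are hypothetical stand-ins for real scan output, real probing, and a real verification step. The point it illustrates is that the main loop hands each promising lead to a concurrent task and keeps scanning, and a triage step deduplicates what comes back. Unlike the “unlimited” sub-agents described above, this sketch bounds concurrency with a semaphore, which is usually what you want against a live network.

```python
import asyncio
from dataclasses import dataclass
from typing import List

# Hypothetical inventory standing in for real port-scan output.
OPEN_PORTS = {"10.0.0.5": [22, 443, 623], "10.0.0.7": [445], "10.0.0.9": []}

@dataclass(frozen=True)  # frozen -> hashable, so findings can be deduplicated
class Finding:
    host: str
    issue: str

def looks_interesting(host: str) -> bool:
    """Cheap inline check the supervisor runs while it keeps scanning."""
    return bool(OPEN_PORTS.get(host))

async def sub_agent(host: str) -> List[Finding]:
    """Hypothetical sub-agent: a slower, deeper investigation of one lead."""
    await asyncio.sleep(0.05)  # stands in for real probing / model reasoning time
    ports = OPEN_PORTS.get(host, [])
    findings = []
    if 623 in ports:
        findings.append(Finding(host, "IPMI/BMC exposed: test default credentials"))
    if 445 in ports:
        findings.append(Finding(host, "SMB exposed: check share write access"))
    return findings

def triage(findings: List[Finding]) -> List[Finding]:
    """Deduplicate while preserving order (a real triage step would also verify)."""
    return list(dict.fromkeys(findings))

async def supervisor(hosts: List[str], max_parallel: int = 32) -> List[Finding]:
    limit = asyncio.Semaphore(max_parallel)

    async def bounded(host: str) -> List[Finding]:
        async with limit:
            return await sub_agent(host)

    tasks = []
    for host in hosts:  # the main loop never blocks on an individual lead
        if looks_interesting(host):
            tasks.append(asyncio.create_task(bounded(host)))
    batches = await asyncio.gather(*tasks)  # results come back in task order
    return triage([f for batch in batches for f in batch])

if __name__ == "__main__":
    hosts = ["10.0.0.5", "10.0.0.7", "10.0.0.9", "10.0.0.5"]  # duplicate lead on purpose
    for finding in asyncio.run(supervisor(hosts)):
        print(finding)
```

The duplicate host in the usage example produces the same finding twice; triage collapses it, which is the kind of noise a human tester would otherwise filter by hand.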

Current capabilities include: automated reconnaissance and service discovery across thousands of hosts, dynamic exploit generation tailored to specific targets, credential testing and lateral movement, vulnerability triage and report generation, continuous 24/7 testing without fatigue, and integration into CI/CD pipelines for change-based testing.

Platforms like PentAGI run fully autonomously in sandboxed Docker environments with 20+ professional security tools, including nmap, Metasploit, and sqlmap. Zen-AI-Pentest (released February 2026) organizes functionality around specialized agents for reconnaissance, vulnerability scanning, exploitation, and reporting. XBOW lets you write specific test instructions like “attempt to access the /admin route as a standard user,” and its AI agent automatically runs various bypass techniques.
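What does an instruction like “access /admin as a standard user” turn into? The sketch below is a hypothetical illustration, not XBOW’s implementation (the article doesn’t describe its internals). It tries a handful of well-known access-control bypass variants (path casing, trailing characters, URL encoding, proxy-trusted headers) with a standard user’s token and records any combination that returns real content. `fetch` is an assumed injected HTTP function, so the check can run against a real client or a test double; only use it on systems you are authorized to test.

```python
from typing import Callable, Dict, List, NamedTuple, Tuple

class Response(NamedTuple):
    status: int
    body: str

# fetch(path, headers) -> Response. Hypothetical, injected so the same check
# works with a real HTTP client or a test double.
Fetch = Callable[[str, Dict[str, str]], Response]

# A few classic bypass variants (illustrative, not exhaustive).
PATH_VARIANTS = ["/admin", "/admin/", "/ADMIN", "/admin;", "/%61dmin", "/./admin"]
HEADER_VARIANTS: List[Dict[str, str]] = [
    {},
    {"X-Original-URL": "/admin"},      # honored by some reverse proxies
    {"X-Forwarded-For": "127.0.0.1"},  # apps that trust "local" callers
]

def check_admin_as_standard_user(fetch: Fetch, user_token: str) -> List[Tuple[str, Dict[str, str]]]:
    """Return every (path, extra_headers) combination that let a standard user in."""
    hits = []
    for path in PATH_VARIANTS:
        for extra in HEADER_VARIANTS:
            headers = {"Authorization": f"Bearer {user_token}", **extra}
            resp = fetch(path, headers)
            # A 200 that is really a login form is not a bypass.
            if resp.status == 200 and "password" not in resp.body.lower():
                hits.append((path, extra))
    return hits
```

Against a simulated app whose `/admin` check is case-sensitive, every header variant of `/ADMIN` would come back as a hit, and nothing else would.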

But here’s what the marketing pages won’t tell you. Every benchmark evaluation shows that general-purpose AI agents consistently underperform purpose-built frameworks. Most off-the-shelf AI pentest tools are closer to the Codex/CyAgent level (underperforming most humans) than the ARTEMIS level (competitive with top humans). The gap between best-in-class and average is enormous.

The Anthropic Wake-Up Call: AI-Orchestrated Cyber Espionage

While the security industry was debating whether AI could help defenders, attackers went ahead and proved it works on offense.

In November 2025, Anthropic disclosed what they called the first documented case of a large-scale AI-orchestrated cyberattack. A Chinese state-sponsored group designated GTG-1002 used Claude Code to conduct an espionage campaign targeting roughly 30 global organizations including major tech companies, financial institutions, and government agencies.

The AI executed 80 to 90% of the operation independently. Human operators handled target selection and strategic decisions at key junctures. Everything else (reconnaissance, vulnerability identification, exploit generation, credential harvesting, lateral movement, data extraction, and report generation) was run by the AI agent.

The threat actor jailbroke Claude by breaking the attack into small, seemingly innocent tasks. The AI then operated at speeds impossible for human hackers, making thousands of requests, often several per second, across multiple targets simultaneously. PwC’s analysis noted that this attack model means bad actors can scale simply by adding compute rather than personnel.

A handful of intrusions succeeded. Anthropic detected and disrupted the campaign within days, banning accounts and deploying new detection systems. But the implications shook the industry hard enough to trigger a Congressional hearing request from the House Committee on Homeland Security.

What makes this different from previous AI-assisted attacks: this wasn’t AI helping humans write better phishing emails. This was AI executing an entire kill chain autonomously. Reconnaissance to exfiltration. With minimal human oversight. At scale. Against real targets.

The Zscaler analysis of the attack made a critical point: because AI agents pursue all viable options simultaneously with aggressive parallelism, traditional deception and decoy-based defenses become more effective, not less. The AI agent is extremely likely to interact with honeypots and decoy credentials because it can’t distinguish them from real targets the way experienced human attackers sometimes can.
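Decoy credentials (honeytokens) are a cheap way to exploit that tendency. The minimal sketch below assumes a hypothetical `DecoyRegistry` that mints plausible-looking service-account credentials, records where each one was planted, and flags any authentication attempt that uses one. Because no legitimate user should ever touch a decoy, every hit is a high-confidence alert. Wiring `check_login` into a real identity provider or SIEM is left out and would depend on your stack.

```python
import secrets
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple

@dataclass
class DecoyRegistry:
    """Tracks honeytoken credentials that no legitimate user should ever use."""
    planted: Dict[str, str] = field(default_factory=dict)  # username -> where it was planted

    def plant(self, location: str) -> Tuple[str, str]:
        # Plausible-looking but unique service account name and random password.
        username = f"svc_backup_{secrets.token_hex(3)}"
        password = secrets.token_urlsafe(16)
        self.planted[username] = location
        return username, password

    def check_login(self, username: str, source_ip: str) -> Optional[str]:
        # Any authentication attempt with a decoy is a high-fidelity alert.
        location = self.planted.get(username)
        if location is None:
            return None
        return f"ALERT: decoy {username!r} (planted in {location}) used from {source_ip}"

registry = DecoyRegistry()
user, pw = registry.plant(r"\\fileserver\IT\old_backup_creds.txt")
print(registry.check_login(user, "10.0.0.42"))     # an alert string
print(registry.check_login("alice", "10.0.0.42"))  # None
```

An agent that greedily tries every credential it harvests will trip this far more reliably than a cautious human attacker who stops to ask why a “backup” password is sitting in an open share.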

Where AI Agents Beat Humans (The Honest List)

I’m not going to sugarcoat this. There are specific domains where AI in penetration testing already outperforms humans. Pretending otherwise doesn’t help anyone.

Speed and coverage. AI agents scan thousands of hosts in the time it takes a human to fully enumerate one subnet. ARTEMIS tested approximately 8,000 hosts in a 16-hour run. A human team would need weeks for equivalent coverage.

Parallelism. Humans are fundamentally single-threaded when it comes to active exploitation. AI agents spawn sub-agents for every promising lead, investigating dozens of attack paths simultaneously. The ARTEMIS study showed this was its single biggest advantage over human testers.

Cost efficiency. ARTEMIS A1 ran at $18.21 per hour. Annualized at 40 hours per week, that’s roughly $37,876. The average U.S. pentester earns approximately $125,034 annually. The more sophisticated ARTEMIS A2 configuration is a different story: at $59 per hour, it costs barely less than the $60 per hour human rate, so the cost advantage comes almost entirely from the A1 configuration.
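The annualized figures follow from one multiplication: 40 hours × 52 weeks = 2,080 hours. That gives $18.21 × 2,080 = $37,876.80 for A1, $59 × 2,080 = $122,720 for A2, and $60 × 2,080 = $124,800 for the study’s human rate, which lands close to the $125,034 average salary. The snippet below just reproduces that arithmetic so you can plug in your own rates.

```python
HOURS_PER_YEAR = 40 * 52  # the article's 40 hr/week basis = 2,080 hours

def annualize(hourly_rate: float) -> float:
    """Convert an hourly cost to a full-time annual cost."""
    return hourly_rate * HOURS_PER_YEAR

RATES = {
    "ARTEMIS A1": 18.21,
    "ARTEMIS A2": 59.00,
    "Human tester (study rate)": 60.00,
}

for name, rate in RATES.items():
    print(f"{name:<26} ${rate:>6.2f}/hr  ->  ${annualize(rate):>9,.0f}/yr")

human = RATES["Human tester (study rate)"]
# A2 is within 2% of the human rate; A1 is roughly a 70% discount.
print(f"A2 as share of human cost: {RATES['ARTEMIS A2'] / human:.1%}")
print(f"A1 as share of human cost: {RATES['ARTEMIS A1'] / human:.1%}")
```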

Consistency and endurance. AI doesn’t get tired at hour 8. It doesn’t have a bad day. It doesn’t skip tedious enumeration tasks because they’re boring. It applies the same systematic methodology to target 3,000 that it applied to target 1.

Legacy system exploitation. In the ARTEMIS study, the AI agent actually outperformed humans on legacy systems. When modern browsers refused to load outdated interfaces due to HTTPS certificate issues, human testers abandoned those targets. ARTEMIS used curl with certificate verification disabled and continued testing. Sometimes being a “dumb” tool helps.
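The ARTEMIS trick amounts to `curl -k`: skip certificate validation so an expired or self-signed certificate doesn’t stop the request. A rough Python equivalent with the standard library is sketched below. `fetch_legacy_interface` is a hypothetical helper name, and disabling verification is only appropriate against in-scope hosts during an authorized test, never in production client code.

```python
import ssl
import urllib.request
from typing import Tuple

def insecure_tls_context() -> ssl.SSLContext:
    """TLS context that skips certificate checks, the rough equivalent of `curl -k`."""
    ctx = ssl.create_default_context()
    ctx.check_hostname = False       # must be turned off before CERT_NONE is allowed
    ctx.verify_mode = ssl.CERT_NONE  # accept expired, self-signed, or mismatched certs
    return ctx

def fetch_legacy_interface(url: str, timeout: float = 10.0) -> Tuple[int, str]:
    """Fetch the first 4 KB of a page from an in-scope legacy device despite a broken certificate."""
    with urllib.request.urlopen(url, context=insecure_tls_context(), timeout=timeout) as resp:
        return resp.status, resp.read(4096).decode("utf-8", errors="replace")
```

Note that certificate errors are only one failure mode: very old devices that offer nothing newer than TLS 1.0 may still be rejected by modern OpenSSL defaults, which would need a separate adjustment.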

Continuous testing economics. The traditional pentest model of 2 weeks per year leaves 95% of the year untested. AI agents make continuous, 24/7 testing economically viable for the first time.

Where Humans Still Crush AI (The Other Honest List)

And now for the part that matters if you’re deciding where to spend your security budget.

Business logic flaws. Can a user manipulate the shopping cart to get a negative total? Can an employee approve their own expense report by modifying one API parameter? These require understanding how the application is supposed to work, not just what it technically does. No AI agent in 2026 reliably discovers these.
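Here is why scanners miss these. In the hypothetical sketch below, the vulnerable cart function is arithmetically correct and every request would return a clean 200 OK; the flaw is only visible if you know the business rule it silently assumes (quantities are positive). The same goes for self-approval: the request is well-formed and authenticated, and only the policy “submitters can’t approve their own reports” makes it wrong. All names here are illustrative.

```python
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class LineItem:
    sku: str
    unit_price_cents: int
    quantity: int  # client-supplied

def cart_total_vulnerable(items: List[LineItem]) -> int:
    # Arithmetically correct, and every request returns 200 OK,
    # but nothing stops a client from sending a negative quantity.
    return sum(i.unit_price_cents * i.quantity for i in items)

def cart_total_checked(items: List[LineItem]) -> int:
    # Enforce the business rule the vulnerable version silently assumed.
    for i in items:
        if i.quantity < 1 or i.unit_price_cents < 0:
            raise ValueError(f"invalid line item: {i}")
    return sum(i.unit_price_cents * i.quantity for i in items)

def approve_expense(report: Dict[str, str], approver_id: str) -> Dict[str, str]:
    # The rule lives in business policy, not the HTTP layer:
    # a valid session and a well-formed request are not enough.
    if report["submitter_id"] == approver_id:
        raise PermissionError("submitters cannot approve their own reports")
    return {**report, "status": "approved", "approved_by": approver_id}

cart = [LineItem("LAPTOP", 120_000, 1), LineItem("CABLE", 2_000, -70)]
print(cart_total_vulnerable(cart))  # -20000: the store now owes the customer money
```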

Creative attack chaining. The best human pentesters chain 3 to 5 low-severity findings into a critical exploit path that no automated system would consider. This requires lateral thinking, domain expertise, and the kind of adversarial creativity that current AI architectures can’t replicate.

GUI-based testing. The ARTEMIS study explicitly flagged this as a major weakness. AI agents operating through command-line interfaces struggle with web applications that require visual interaction, form navigation, and understanding of UI state. Humans easily recognize that a “200 OK” response might actually be a login page served in place of the requested resource (a “soft” redirect). AI agents frequently miss this context. (For what it’s worth, the XHack AI Agent handles browser interaction natively, which is one reason we built our own rather than adopting an existing framework.)
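A command-line agent can partially compensate with heuristics. The sketch below is a hypothetical `is_soft_login_redirect` check that treats a 200 response as an authentication wall if the final URL (after server-side redirects) looks like a login route, or if the body contains a password field, a JavaScript redirect toward a login page, or a sign-in title. The patterns are illustrative and will miss custom login flows, which is exactly why browser-level context still matters.

```python
import re

# Heuristic markers that a "successful" page is really a login screen.
LOGIN_BODY = re.compile(
    r"""type=["']?password"""                      # a password input field
    r"""|window\.location[^;]*log-?in"""           # JavaScript redirect toward a login route
    r"""|<title>[^<]*(sign[ -]?in|log[ -]?in)""",  # login-style page title
    re.IGNORECASE,
)
LOGIN_PATH = re.compile(r"/(login|signin|sign-in|auth)\b", re.IGNORECASE)

def is_soft_login_redirect(status: int, final_url: str, body: str) -> bool:
    """True if a 200 response is actually an authentication wall, not the resource."""
    if status != 200:
        return False
    if LOGIN_PATH.search(final_url):  # server-side redirects already followed
        return True
    return bool(LOGIN_BODY.search(body))
```

With `urllib`, for example, `final_url` would come from the response’s `geturl()` after redirects are followed.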

False positive rates. AI agents consistently generate more false positives than experienced human testers. ARTEMIS achieved 82% precision, which is impressive for AI but means nearly 1 in 5 findings were invalid. An experienced human pentester typically achieves 95%+ precision.
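Precision translates directly into analyst time. The snippet below converts a precision rate into the hours spent disproving invalid findings. The 100-finding volume and the 30 minutes to rule out each false positive are hypothetical assumptions for illustration, not figures from the study.

```python
def precision(valid: int, submitted: int) -> float:
    """Share of submitted findings that turned out to be real."""
    return valid / submitted

def false_positive_triage_hours(findings: int, precision_rate: float,
                                minutes_per_false_positive: float) -> float:
    """Analyst time spent disproving invalid findings."""
    false_positives = findings * (1 - precision_rate)
    return false_positives * minutes_per_false_positive / 60

# Hypothetical: 100 findings, 30 minutes to rule out each false positive.
for label, p in [("ARTEMIS-level (82%)", 0.82), ("Experienced human (95%)", 0.95)]:
    print(f"{label}: ~{false_positive_triage_hours(100, p, 30):.1f} triage hours")
```

At 82% precision, 18 of every 100 findings need to be disproved; at 95%, only 5. That gap is the hidden cost behind a cheap hourly rate.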

Social engineering and physical security. No AI agent is walking into your office with a clipboard and a high-vis vest to test physical access controls. No AI agent is building rapport with a help desk employee to extract a password reset. These attack vectors remain entirely human.

Contextual understanding. A human pentester understands that the staging environment they just compromised shares credentials with production. An AI agent reports the finding without understanding the blast radius. Context transforms a medium-severity finding into a critical one, and AI still struggles with this.
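Some of that context can be checked mechanically once a human knows to ask the question. The hypothetical sketch below compares secret values across environment configs and reports any that are reused between, say, staging and production. It compares keyed hashes (HMAC with a per-run random key) instead of raw values, so secrets are never stored in the result. Judging what a reused credential means for blast radius is still the human’s job.

```python
import hashlib
import hmac
import secrets
from collections import defaultdict
from typing import Dict, List, Tuple

def find_shared_secrets(envs: Dict[str, Dict[str, str]]) -> List[List[Tuple[str, str]]]:
    """Group (environment, key) pairs whose secret values are identical across environments."""
    run_key = secrets.token_bytes(32)  # fresh key per run; fingerprints aren't reusable
    by_fingerprint = defaultdict(set)
    for env, config in envs.items():
        for key, value in config.items():
            fp = hmac.new(run_key, value.encode(), hashlib.sha256).hexdigest()
            by_fingerprint[fp].add((env, key))
    return [
        sorted(locations)
        for locations in by_fingerprint.values()
        if len({env for env, _ in locations}) > 1  # reused across environments
    ]

configs = {
    "staging":    {"DB_PASSWORD": "Winter2025!", "API_KEY": "stg-4f1c"},
    "production": {"DB_PASSWORD": "Winter2025!", "API_KEY": "prd-9a2e"},
}
print(find_shared_secrets(configs))
```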

Compliance and regulatory nuance. SOC 2 auditors, PCI QSAs, and ISO assessors want to talk to the humans who tested the system. They want to understand methodology decisions, scope limitations, and risk context. An AI-generated report doesn’t satisfy that requirement, regardless of its technical accuracy.

The Real AI Pentesting Tools Landscape in 2026

The AI pentesting market is crowded, confusing, and full of inflated claims. Here’s an honest categorization of what actually exists.

| Category | Examples | Reality Check |
|---|---|---|
| Hybrid AI + Human Platforms | XHack AI Agent + human testers | AI handles recon, scanning, and parallel exploitation at $15/hr. Humans handle business logic, creative chains, and validation. Best of both worlds |
| Fully Autonomous Agents | ARTEMIS (open-source), PentAGI, Penligent | Impressive in benchmarks. Limited real-world track record. ARTEMIS is research-grade, not production-ready |
| Agentic PTaaS Platforms | Escape, Terra Security, XBOW, Hadrian | Good hybrid approach. AI handles reconnaissance and common vulns, humans handle complex findings |
| AI-Assisted Human Testing | Cobalt (AI + human), traditional firms adding AI | AI handles “boring stuff” (port scanning, SSL checks, headers). Humans spend 100% of time on business logic |
| Open-Source Frameworks | PentestGPT, HackingBuddyGPT, Zen-AI-Pentest | Useful for researchers and advanced teams. Require significant configuration. Not turnkey solutions |
| Marketing-First “AI Pentesting” | Various vendors we won’t name | Vulnerability scanner with ChatGPT summary bolted on. Avoid these |

The spark42.tech evaluation framework scores 13 attributes across these tools and makes a critical recommendation: treat these tools as automation assistants under human control, not autonomous operators. NIST SP 800-115 and the OWASP Web Security Testing Guide remain the baseline for credible testing and reporting.

The Economics: $18/Hour vs $60/Hour

Let’s talk numbers because this is ultimately what drives adoption decisions.

The cost breakdown across leading AI agents:

| Configuration | Hourly Cost | Annualized (40hr/week) | Key Strengths | Key Limitations |
|---|---|---|---|---|
| XHack AI Agent | $15/hr | ~$31,200 | Parallel execution, browser-based testing, multi-vector attack paths, GUI interaction | Newer to market |
| ARTEMIS A1 (GPT-5 only) | $18.21/hr | ~$37,876 | Strong enumeration, open-source | ~18% false positive rate, no GUI testing |
| ARTEMIS A2 (multi-model ensemble) | $59/hr | ~$122,720 | Multi-model reasoning | Expensive for marginal gains over A1 |
| Human Pentester (U.S. average) | $60/hr | ~$125,034 | Creative exploitation, business logic, context | Single-threaded, limited hours, fatigue |

XHack AI Agent deserves its own callout here. Where ARTEMIS was built as a research framework to prove a concept, XHack’s agent was engineered from the ground up for production pentesting. It runs at $15 per hour, cheaper than ARTEMIS A1, while addressing several of ARTEMIS’s documented weaknesses. It handles browser-based testing natively (ARTEMIS struggled with GUI interactions). It runs parallel attack paths across multiple vectors simultaneously. And it chains findings across different attack surfaces in ways that most AI frameworks can’t.

Is it biased for me to mention our own tool in this article? Absolutely. But I’d rather be transparent about the bias than pretend it doesn’t exist. Don’t take my word for it: you’re welcome to benchmark it against anything on this list.

The real math is more complicated than it looks.

AI agents excel at breadth. They can cover massive attack surfaces continuously at a fraction of human cost. But even the best AI agents, including ours, still miss the high-value business logic findings that only creative human testing discovers. A business logic flaw that lets an attacker drain customer accounts is worth more than 50 medium-severity CVEs that an automated scanner could have found.

The penetration testing market is projected to hit $5 billion by 2030. The smartest firms aren’t choosing between AI and human. They’re using AI to handle the 80% of work that’s systematic enumeration and common vulnerability checking, then deploying expensive human expertise on the 20% that actually requires creativity and context.

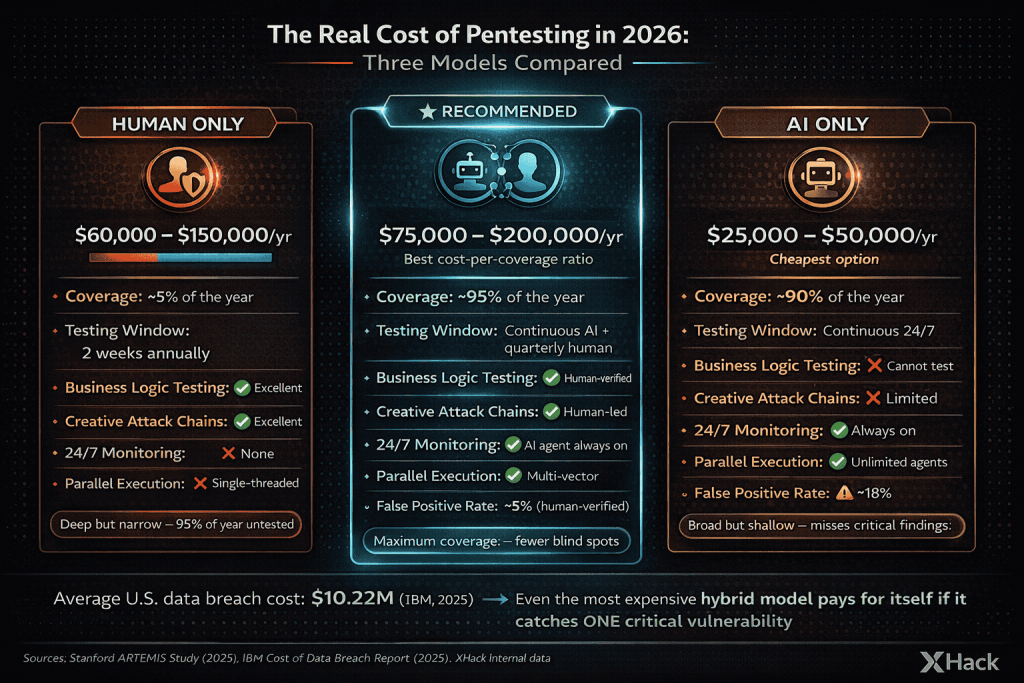

For the budget-conscious CISO: Annual AI continuous testing ($30K to $50K) plus quarterly focused human pentests ($40K to $100K) gives you better coverage than either approach alone at roughly the same total cost as traditional annual-only human testing.

What Google’s Threat Intelligence Says About AI in Attacks

Google’s Cybersecurity Forecast 2026 and their February 2026 AI Threat Tracker paint a picture that every security leader needs to internalize.

AI is now standard in the attack lifecycle. Google’s Threat Intelligence Group observed threat actors integrating AI to accelerate reconnaissance, social engineering, and malware development throughout Q4 2025. This is no longer experimental. It’s operational.

State-sponsored groups are leading adoption. China’s APT31 used Gemini with the Hexstrike MCP tooling to automate vulnerability analysis and generate targeted testing plans against specific U.S.-based targets. Iran’s APT42 used AI to craft hyper-realistic phishing personas with native language fluency. North Korea’s UNC2970 used AI for target profiling of defense and cybersecurity personnel.

Google identified malware that makes live API calls to Gemini during execution. The malware family HONESTCUE dynamically requests AI-generated code to carry out specific attack tasks, rather than embedding all malicious functions upfront. This represents a fundamental shift in malware architecture.

The underground is responding. Tools like Xanthorox advertise themselves as custom AI for autonomous malware generation, though Google’s investigation revealed they’re actually powered by stolen API keys to commercial models like Gemini. The barrier to entry for sophisticated attacks is dropping fast.

The key quote from Google’s chief threat analyst: adversaries adopting agentic AI capabilities is “the next shoe to drop.” He noted that AI agents are already helping attackers operate across the full intrusion lifecycle and automate vulnerability exploitation, widening the patch gap between discovery and remediation.

What this means for pentesting: the threat model has changed. Your penetration tests need to simulate adversaries who use AI agents, not just adversaries who use Metasploit. If your pentesting methodology hasn’t evolved to account for AI-augmented attacks, your test results don’t reflect the actual threats you face.

The Hybrid Model: Why “AI vs Human” Is the Wrong Question

The entire “AI agents vs human hackers” framing is wrong. And honestly, it’s being pushed mostly by people trying to sell you one or the other.

The correct question is: how do you combine AI and human capabilities to maximize security outcomes within your budget?

Here’s the framework that actually works in 2026.

Layer 1: Continuous AI Scanning (Always On). Deploy agentic AI tools for continuous attack surface monitoring, automated vulnerability detection, and change-based testing triggered by every deployment. This catches the known vulnerabilities, misconfigurations, and common attack patterns that represent roughly 70 to 80% of real-world exploits. Cost: $25,000 to $50,000 per year.

Layer 2: Periodic Deep Human Testing (Quarterly or Per Major Release). Bring in human pentesters for focused engagements targeting business logic, creative attack chains, authentication/authorization bypasses, and any complex application functionality that AI can’t adequately test. This finds the 20% of vulnerabilities that actually keep CISOs up at night. Cost: $40,000 to $120,000 per year.

Layer 3: Annual Red Team / Adversary Simulation. Full-scope engagement simulating a motivated, AI-augmented adversary. Social engineering, physical if applicable, and multi-vector attacks designed to test your detection and response capabilities, not just your vulnerabilities. Cost: $50,000 to $150,000.

Total hybrid investment: $115,000 to $320,000 per year. Compare that to the $10.22 million average U.S. data breach cost (IBM, 2025) or the $4.44 million global average. The ROI math writes itself.
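The totals are just the sums of the three layer ranges ($25K + $40K + $50K at the low end, $50K + $120K + $150K at the high end). The snippet below reproduces them and expresses the high-end program as a share of a single average breach, using the IBM figures quoted above: about 3% of the U.S. average and about 7% of the global average.

```python
from typing import Dict, Tuple

LAYERS: Dict[str, Tuple[int, int]] = {
    "Layer 1: continuous AI scanning": (25_000, 50_000),
    "Layer 2: periodic deep human testing": (40_000, 120_000),
    "Layer 3: annual red team": (50_000, 150_000),
}
BREACH_COST = {"U.S. average (IBM, 2025)": 10_220_000, "Global average (IBM, 2025)": 4_440_000}

def program_cost(layers: Dict[str, Tuple[int, int]]) -> Tuple[int, int]:
    """Sum the low and high ends of each layer's annual cost range."""
    low = sum(lo for lo, _ in layers.values())
    high = sum(hi for _, hi in layers.values())
    return low, high

low, high = program_cost(LAYERS)
print(f"Hybrid program: ${low:,} to ${high:,} per year")  # $115,000 to $320,000
for label, breach in BREACH_COST.items():
    print(f"High-end program as share of one breach, {label}: {high / breach:.1%}")
```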

What This Means for Your Security Budget

Let me be direct about what AI in penetration testing means for different organizations in 2026.

If you’re a startup (under 50 employees): AI-assisted PTaaS platforms are your best bet. You get continuous coverage, developer-friendly reporting, and CI/CD integration at a price point that makes sense. Supplement with a focused manual pentest before major compliance milestones. Budget: $15,000 to $40,000 annually.

If you’re mid-market (50 to 500 employees): The hybrid model is your sweet spot. Continuous AI testing catches the bulk of issues. Quarterly human assessments find what AI misses. You’re big enough to be a real target but probably don’t have a 20-person security team. This approach maximizes coverage per dollar. Budget: $60,000 to $150,000 annually.

If you’re enterprise (500+ employees): You should be running all three layers plus internal security research. AI continuous testing, regular human pentesting, annual red teaming, and potentially your own AI agent deployment for internal continuous validation. Budget: $200,000+ annually.

The companies that will get burned in 2026 are the ones that either go all-in on AI testing (missing the critical findings that require human creativity) or ignore AI entirely (leaving 95% of their year untested while paying premium rates for annual-only human engagements).

How XHack Thinks About AI in Penetration Testing

Obligatory bias disclosure: I’m about to talk about our company. I’ll be honest about both what we do and what we’re still figuring out.

Our position on AI in pentesting is simple: AI is a force multiplier for skilled humans, not a replacement for them. That’s why we built our own AI agent to work alongside our testers.

The XHack AI Agent was built because we got tired of watching the industry split into two camps: pure-AI vendors selling glorified scanners and traditional firms pretending AI doesn’t exist. We wanted a third option. An AI agent engineered specifically for offensive security that works alongside our human testers, not instead of them.

What the XHack AI Agent actually does:

It runs at $15 per hour. It handles browser-based testing natively, which solves the single biggest weakness documented in the ARTEMIS study (GUI interaction failures). It executes parallel attack paths across multiple vectors simultaneously, meaning it’s probing your web app, API, and network attack surface at the same time. It chains findings across different surfaces automatically. And it operates 24/7 without the “I need coffee” problem.

Where it outperforms ARTEMIS: browser interaction, multi-vector parallel execution, lower cost per hour, and production-readiness. ARTEMIS is a brilliant research framework. Ours is built for real client engagements.

Where humans still lead, even with our AI agent:

Business logic testing. Our AI agent will flag that an endpoint accepts unusual input. A human tester understands that the unusual input means you can transfer money to yourself.

Creative attack chaining. The AI finds the pieces. The human sees how they fit together into a critical exploit path.

Contextual risk assessment. Understanding that the staging database shares credentials with production requires business knowledge, not just technical scanning.

Our hybrid workflow in practice:

The XHack AI Agent runs continuous reconnaissance and automated testing. When it finds interesting leads, it escalates to our OSCP/OSWE-certified human testers for manual exploitation, validation, and business logic testing. Every finding in our reports has been verified by a human. The AI makes our humans faster and more thorough. The humans make the AI’s output actually useful.
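The rule “every finding in the report has been verified by a human” can be enforced as a simple state machine. The sketch below is a hypothetical illustration of that gate, not XHack’s actual pipeline: AI findings start as candidates, are escalated into a human queue ordered by severity, and only findings a tester confirms are eligible for the report. All names (`Status`, `escalate`, `human_review`, `report`) are assumptions for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional

SEVERITY_RANK = {"info": 0, "low": 1, "medium": 2, "high": 3, "critical": 4}

class Status(Enum):
    AI_CANDIDATE = "ai_candidate"      # raised by the AI agent, unreviewed
    ESCALATED = "escalated"            # queued for a human tester
    HUMAN_VERIFIED = "human_verified"  # reproduced and confirmed by a human
    REJECTED = "rejected"              # false positive, never reaches the report

@dataclass
class Finding:
    title: str
    severity: str
    status: Status = Status.AI_CANDIDATE
    reviewed_by: Optional[str] = None

def escalate(findings: List[Finding]) -> List[Finding]:
    """Move AI candidates into the human queue, most severe first."""
    queue = [f for f in findings if f.status is Status.AI_CANDIDATE]
    for f in queue:
        f.status = Status.ESCALATED
    return sorted(queue, key=lambda f: SEVERITY_RANK[f.severity], reverse=True)

def human_review(finding: Finding, tester: str, confirmed: bool) -> None:
    """Record a human verdict; only escalated findings can be reviewed."""
    if finding.status is not Status.ESCALATED:
        raise ValueError("only escalated findings can be reviewed")
    finding.status = Status.HUMAN_VERIFIED if confirmed else Status.REJECTED
    finding.reviewed_by = tester

def report(findings: List[Finding]) -> List[Finding]:
    """Only human-verified findings are eligible for the client report."""
    return [f for f in findings if f.status is Status.HUMAN_VERIFIED]
```

The design choice worth copying is that the report function has no path for unreviewed AI output: skipping review produces an empty report rather than an unverified one.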

What XHack doesn’t do (yet):

Massive enterprise red team engagements spanning 6+ months. Physical security testing. And we book up fast during peak compliance seasons. If you need turnaround in under a week, we’ll tell you honestly rather than rush and deliver garbage.

FAQ

Can AI fully replace human penetration testers in 2026?

No. The best AI agent framework (ARTEMIS) outperformed 9 of 10 humans in a controlled study, but still missed findings that humans caught, had higher false-positive rates, and struggled with business logic testing and GUI-based interactions. AI agents are powerful complements to human testers, not replacements. The most effective approach combines both.

How much does AI penetration testing cost?

AI continuous testing platforms typically cost $25,000 to $50,000 per year for ongoing coverage. Traditional human pentesting runs $10,000 to $50,000 per engagement and covers only the engagement window, but AI testing alone misses critical vulnerability categories. The optimal budget combines both: $60,000 to $150,000 annually for mid-market companies.

Is AI-powered pentesting safe to use on production systems?

Current production-grade AI pentesting platforms operate within defined boundaries and include safety controls. However, the technology is still maturing. Most vendors recommend starting with staging environments and gradually expanding to production with appropriate guardrails. Human oversight of AI agent activity remains essential.

How are attackers using AI in real cyberattacks?

As of February 2026, state-sponsored groups from China, Iran, North Korea, and Russia are actively using AI across the attack lifecycle. The Anthropic GTG-1002 incident showed AI handling 80 to 90% of a sophisticated espionage operation autonomously. Google’s latest threat intelligence confirms attackers are integrating AI for reconnaissance, social engineering, exploit development, and even building malware that makes live API calls to AI models during execution.

What should I look for in an AI pentesting tool?

Prioritize tools that combine AI automation with human oversight capabilities. Check whether the tool can test business logic (most can’t), verify its false-positive rate on benchmarks, confirm it integrates with your CI/CD pipeline if needed, and ask whether findings include proof-of-concept exploitation or just scanner output. The spark42.tech and Escape.tech evaluations provide the most rigorous independent comparisons available.

The bottom line on AI in penetration testing: the technology is real, the progress is fast, and the implications are massive. But anyone telling you AI replaces human pentesters in 2026 is either selling something or hasn’t read the actual research. The future is hybrid. Plan accordingly.

At XHack, we don’t make you choose. We have both AI and human testing capabilities, and we utilize both during every engagement. Our AI agent handles continuous reconnaissance, parallel multi-vector testing, and native browser interaction across your entire attack surface. Our OSCP/OSWE-certified human testers handle business logic exploitation, creative attack chaining, and contextual risk analysis. Every finding is human-verified before it hits your report.

One thing we’re strict about: we only deploy our AI agent after obtaining explicit permission from the client. Full transparency, no surprises. And for the privacy-conscious, XHack AI does not store any client data. Zero retention. Your systems get tested, the findings go into your report, and nothing lingers on our side.

AI handles the breadth. Humans handle the depth. You get both, which means significantly fewer blind spots and a dramatically lower chance of missing the vulnerability that actually gets you breached.

Want to see the difference? Talk to us and we’ll show you exactly what our hybrid AI + human approach catches that either method alone would miss.
