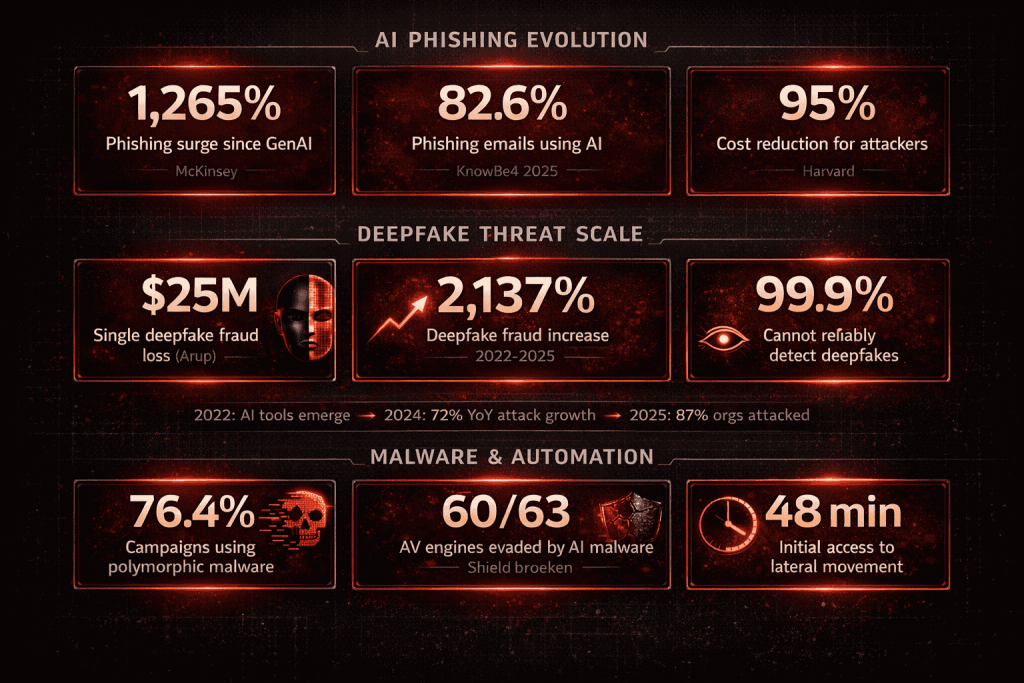

30-Second Summary: AI cyber threats grew 72% year over year, and 87% of global organizations faced AI-powered cyberattacks in the past 12 months. AI-generated phishing now accounts for 82.6% of all phishing emails, a 53.5% increase from the prior year. Phishing attacks surged 1,265% since generative AI platforms became widely available. A finance worker at engineering firm Arup transferred $25 million after attending a video call where every executive face and voice was an AI-generated deepfake.

Deepfake fraud rose 2,137% between 2022 and 2025. Polymorphic malware that reshapes itself every 15 seconds is now present in 76.4% of phishing campaigns. AI password-cracking tools crack 81% of common passwords within a month. AI produces phishing campaigns at 95% lower cost and 40% faster than manual methods. And 76% of organizations cannot match the speed of AI-driven attacks. This is XHack’s field guide to how attackers weaponize generative AI across every major threat category, with the defense strategies that actually work.

Generative AI didn’t just give defenders better tools. It gave attackers a force multiplier that changed the economics of cybercrime overnight.

Before generative AI, a convincing phishing campaign required a skilled social engineer who could write persuasive English, research targets manually, and craft individualized lures. That campaign cost time, required expertise, and scaled linearly with the number of targets.

After generative AI, that same campaign costs 95% less, executes 40% faster, produces grammatically perfect content in any language, and scales to millions of targets simultaneously. AI cyber threats don’t just make existing attacks better. They make attacks that were previously uneconomical suddenly profitable at massive scale.

The shift is measurable. AI-powered cyberattacks increased 72% year over year. 87% of organizations faced AI-powered attacks in the past year. The FBI logged hundreds of deepfake-based business email compromise scams in 2025 alone. And Deepfake-as-a-Service platforms are now commercially available to cybercriminals of any skill level.

At XHack, we test organizations against exactly these AI-enhanced attack vectors. This guide covers every major category of AI cyber threats: how each one works, the real incidents that demonstrate impact, the numbers that quantify the scale, and the defense strategies we recommend.

AI-Powered Phishing: The Numbers Are Staggering

Phishing was already the most common attack vector before AI. Generative AI turned it into something qualitatively different.

The scale shift. Phishing attacks increased 1,265% since the proliferation of generative AI platforms beginning in 2022. Credential phishing attempts jumped 703% in 2024 driven by AI-generated phishing kits. A 202% surge in phishing email messages occurred in the second half of 2024 alone. Financial losses from phishing hit $17.4 billion globally in 2024, a 45% year-over-year increase.

Why AI phishing is more effective. Traditional phishing had telltale signs: grammatical errors, generic greetings, implausible scenarios. AI-generated phishing eliminates all of these. KnowBe4’s 2025 analysis found that 82.6% of phishing emails now use AI, up 53.5% from the previous year. Harvard Business School research measured AI phishing at a 60% success rate, comparable to human-crafted campaigns but at massively increased scale and 95% lower cost.

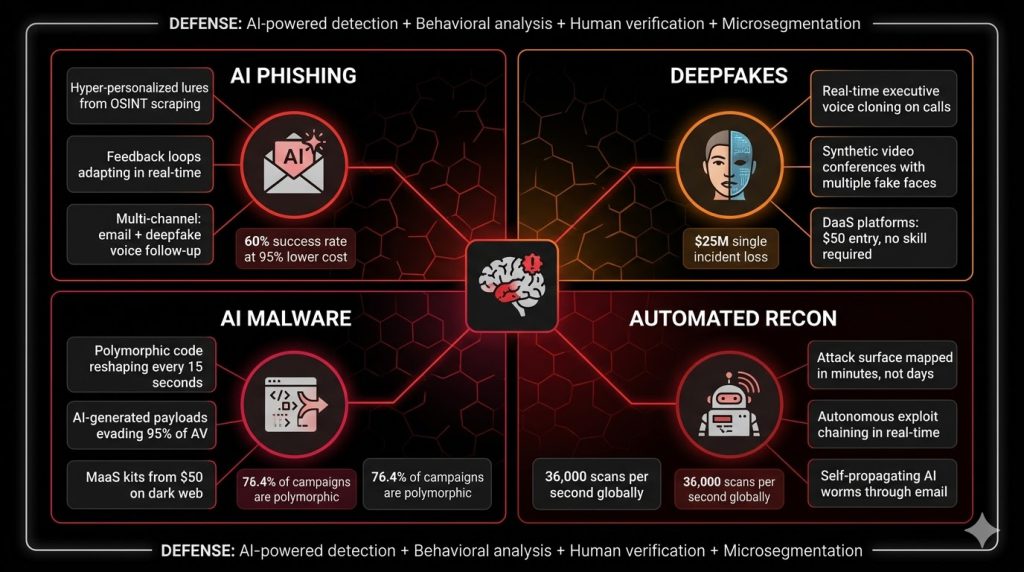

How it actually works. Attackers use LLMs to generate personalized phishing content at scale. The AI scrapes publicly available information about the target (LinkedIn profiles, company websites, social media posts, press releases), then generates emails that reference real projects, real colleagues, and real events. The result is a message that feels personally written because it was, by an AI that researched the target first.

Advanced AI phishing systems now incorporate feedback loops. When a particular email variant triggers spam filters, the AI automatically adjusts linguistic patterns, sender domains, and content structure within hours. A financial services company blocked an initial AI phishing wave targeting customer service representatives, only to face a modified version six hours later using completely different patterns.

The multi-channel evolution. AI cyber threats now combine email phishing with deepfake voice calls. A pharmaceutical company’s accounts payable team received a phishing email followed by a call using their CEO’s cloned voice referencing that email to authorize wire transfers. The combination of channels created urgency and authenticity that bypassed standard approval workflows.

XHack defense recommendation: Deploy AI-powered email security that detects AI-generated content patterns, not just known signatures. Implement behavioral analysis on email traffic. Run AI-realistic phishing simulations monthly, not quarterly. Train staff specifically on multi-channel attacks where email is followed by voice or video confirmation.
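To make "behavioral analysis on email traffic" concrete, here is a minimal sketch of header-level checks that do not depend on spotting bad grammar. It assumes the receiving mail server has already written an `Authentication-Results` header. The domain, the executive names, and the three signals chosen are hypothetical illustrations, not a description of any particular product.

```python
from email import message_from_string
from email.utils import parseaddr

# Hypothetical values for illustration; load these from your own directory.
ORG_DOMAIN = "example.com"
EXECUTIVE_NAMES = {"jane doe", "john smith"}


def domain_of(address: str) -> str:
    """Return the lowercased domain part of an address, or '' if absent."""
    return address.rpartition("@")[2].lower() if "@" in address else ""


def impersonation_signals(raw_email: str) -> list[str]:
    """Collect heuristic warning signals from the message headers."""
    msg = message_from_string(raw_email)
    display_name, from_addr = parseaddr(msg.get("From", ""))
    _, reply_addr = parseaddr(msg.get("Reply-To", ""))
    auth_results = msg.get("Authentication-Results", "").lower()

    signals = []
    from_domain = domain_of(from_addr)
    # An executive's name on a message from outside the organization.
    if display_name.strip().lower() in EXECUTIVE_NAMES and from_domain != ORG_DOMAIN:
        signals.append("executive display name from external domain")
    # Replies silently routed to a different domain than the sender's.
    if reply_addr and domain_of(reply_addr) != from_domain:
        signals.append("Reply-To domain differs from From domain")
    # Explicit authentication failures recorded by the receiving server.
    for check in ("spf", "dkim", "dmarc"):
        if f"{check}=fail" in auth_results:
            signals.append(f"{check} failed")
    return signals


sample = (
    "From: Jane Doe <jane.doe@examp1e-mail.net>\n"
    "Reply-To: payments@another-domain.org\n"
    "Authentication-Results: mx.example.com; spf=pass; dkim=none; dmarc=fail\n"
    "Subject: Urgent wire transfer\n"
    "\n"
    "Please process today.\n"
)
print(impersonation_signals(sample))
```

Signals like these are cheap to compute and stay useful when the message body is flawless, which is exactly the case AI-generated phishing creates. In practice they would feed a scoring system rather than block mail on their own.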

Deepfakes: From Novelty to Operational Attack Tool

Deepfake technology crossed the threshold from research curiosity to production-grade attack tool in 2025. Deepfake-as-a-Service platforms are now commercially available, making the technology accessible to cybercriminals of any skill level.

The numbers. Deepfakes now account for 6.5% of all fraud attacks, a 2,137% rise between 2022 and 2025. 85% of organizations have encountered deepfake attacks. 53% of financial professionals have experienced attempted deepfake scams. The AI voice cloning market is valued at $4.4 billion in 2026 and is projected to reach $25.6 billion by 2033. And 99.9% of people cannot reliably identify deepfakes.

The $25 million Arup incident. In February 2024, a finance worker at Arup, the multinational engineering firm, transferred $25 million after attending what appeared to be a legitimate video conference with the company’s CFO and senior leadership. Every face on the screen looked real. Every voice matched perfectly. All of them were AI-generated deepfakes created from publicly available footage of the executives. The attackers cloned voices and faces, scheduled a convincing video call, and instructed the worker to make the wire transfers in real time.

Deepfake-as-a-Service. 2025 marked the commercialization of deepfake attack tools. DaaS platforms offer ready-to-use AI tools for voice cloning, video generation, image manipulation, and persona simulation. In Singapore, attackers used DaaS to impersonate executives and instruct employees to transfer millions to fraudulent accounts. The barrier to entry dropped from “nation-state capability” to “anyone with a credit card.”

Attack patterns we see in testing. Real-time voice clones of executives issuing payment instructions. Manipulated Zoom and Teams calls with synthetic faces and voices. Pre-recorded “emergency” videos that appear authentic to non-technical staff. Synthetic identity creation for account fraud and social engineering. These attacks bypass technical controls entirely and exploit authority, urgency, and trust.

The detection gap. 71% of people globally do not know what a deepfake is. Only 0.1% can consistently identify them. YouTube has the highest deepfake exposure, with 49% of surveyed users reporting encounters. The gap between deepfake sophistication and human detection capability is widening, not closing.

XHack defense recommendation: Implement out-of-band verification for any financial transaction or sensitive request initiated through video or voice, regardless of how authentic it appears. Deploy deepfake detection technology on video conferencing platforms. Establish code words or callback procedures for executive-initiated wire transfers. Train finance and leadership teams specifically on deepfake social engineering with realistic simulations.
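The out-of-band rule above can be expressed as a simple release gate. This is a sketch under stated assumptions: the channel list, the field names, and the choice to accept either a callback or a code word are illustrative, and a stricter policy could require both.

```python
from dataclasses import dataclass

# Channels where a deepfake or spoof can fake the requester's identity
# (illustrative list, not exhaustive).
IMPERSONABLE_CHANNELS = {"email", "voice", "video", "chat"}


@dataclass
class PaymentRequest:
    requester: str                     # claimed identity, e.g. "CFO"
    channel: str                       # how the request arrived
    amount_usd: int
    callback_confirmed: bool = False   # confirmed via a phone number on file
    code_word_ok: bool = False         # pre-agreed code word was supplied


def may_release(request: PaymentRequest) -> bool:
    """Release funds only if the request is independently verified.

    How authentic the call looked or sounded is deliberately not an input.
    """
    if request.channel in IMPERSONABLE_CHANNELS:
        return request.callback_confirmed or request.code_word_ok
    return True


# A convincing video call alone is not enough to move money.
video_request = PaymentRequest("CFO", "video", 25_000_000)
print(may_release(video_request))   # False

video_request.callback_confirmed = True
print(may_release(video_request))   # True
```

The design point is that the decision never depends on a human judging whether the face or voice was genuine, since that is the judgment deepfakes are built to defeat.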

AI-Generated Malware: Polymorphic Threats at Machine Speed

Generative AI fundamentally changed malware development. Attackers now use LLMs to write, obfuscate, and evolve malicious code faster than signature-based detection can keep up.

Polymorphic malware at scale. AI-generated polymorphic malware changes its identifiable features (file hash, code structure, behavioral signatures) every time it replicates. Advanced strains generate a new, unique version of themselves as frequently as every 15 seconds during an attack. Polymorphic tactics are now present in 76.4% of all phishing campaigns. Over 70% of major breaches involve some form of polymorphic malware.

The cost of entry collapsed. Malware-as-a-Service kits like BlackMamba and Black Hydra 2.0 are available for as little as $50. An OPSWAT researcher built a full malware chain in under two hours using publicly available AI tools. The AI-generated payload evaded detection by 60 out of 63 antivirus engines on VirusTotal. Behavioral analysis and sandboxing also failed to flag it. This wasn’t a nation-state operator. It was a motivated analyst using consumer-grade hardware and publicly accessible models.

How attackers use AI for malware development. LLMs generate initial exploit code based on vulnerability descriptions. AI tools obfuscate payloads to evade signature-based detection. Generative models fine-tuned on malware samples produce novel variants that match no known signatures. AI automates the testing cycle: generate payload, test against detection, adjust, repeat, all without human intervention.

AI-powered vulnerability discovery. 41% of zero-day vulnerabilities in 2025 were discovered through AI-assisted reverse engineering by attackers. AI agents now map entire attack surfaces in minutes rather than days, identifying vulnerabilities and testing exploitation techniques autonomously. Automated scanning activities rose 16.7% to reach 36,000 scans per second globally.

AI-assisted password cracking. AI password-cracking tools crack 81% of common passwords within a month. In one benchmark, AI cracked 51% of 15.68 million common passwords in under one minute. Combined with the fact that 94% of passwords are reused or duplicated, AI-powered credential attacks achieve success rates that make brute force economically viable at scale.

XHack defense recommendation: Shift from signature-based detection to behavioral analysis and anomaly detection. Deploy EDR/XDR platforms that use ML-based behavioral models rather than static signatures. Implement application allowlisting where possible. Monitor for AI-generated code patterns in submitted files and scripts. Assume polymorphic evasion and design detection for behavior, not appearance.
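A toy example shows why "behavior, not appearance" matters. The two byte strings below are harmless placeholders that stand in for two variants of the same payload, and the action names are hypothetical labels of the kind an EDR sensor might emit. Real behavioral models are far richer than a set comparison, so treat this as a sketch of the idea only.

```python
import hashlib


def file_signature(payload: bytes) -> str:
    """Static signature: the SHA-256 hash of the file contents."""
    return hashlib.sha256(payload).hexdigest()


# Two harmless stand-ins for variants that differ only in padding bytes.
variant_a = b"payload-body" + b"\x00" * 4
variant_b = b"payload-body" + b"\x90" * 7

# A signature blocklist built after seeing only the first variant.
KNOWN_BAD_HASHES = {file_signature(variant_a)}

# Hypothetical behavioral rule: this combination of actions is suspicious.
SUSPICIOUS_BEHAVIOR = frozenset({"read_browser_credentials", "connect_unknown_host"})


def signature_match(payload: bytes) -> bool:
    return file_signature(payload) in KNOWN_BAD_HASHES


def behavior_match(observed_actions: list[str]) -> bool:
    """True if every action in the rule was observed at runtime."""
    return SUSPICIOUS_BEHAVIOR <= frozenset(observed_actions)


# Both variants do the same things at runtime, whatever their bytes look like.
observed = ["spawn_shell", "read_browser_credentials", "connect_unknown_host"]

print(signature_match(variant_a))   # True: this exact file was seen before
print(signature_match(variant_b))   # False: new padding, new hash
print(behavior_match(observed))     # True for either variant
```

Changing a few bytes is enough to defeat the hash lookup, while the behavioral rule still fires because what the code does has not changed.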

AI-Powered Reconnaissance and Attack Automation

Beyond phishing, deepfakes, and malware, AI cyber threats extend to automating the entire attack lifecycle.

Automated attack surface mapping. AI agents scrape organizational and employee data across public sources, map network infrastructure, identify exposed services, and prioritize high-value targets, all without human direction. What took a human attacker days of manual reconnaissance now takes an AI agent minutes.

Autonomous exploit chaining. AI systems chain multiple vulnerabilities together, adapting strategies in real time based on defensive responses. If one exploitation path is blocked, the AI pivots to an alternative within seconds. This adaptive capability means that patching a single vulnerability no longer stops the attack if the AI has already mapped alternative routes.

Self-propagating AI attacks. Researchers designed proof-of-concept AI worms that spread through email systems: a malicious prompt in an email tricks an AI assistant into exfiltrating data and forwarding the attack to other contacts. The worm self-propagates without human direction, creating chain reactions across connected systems.

The speed differential. CrowdStrike’s 2025 data shows attackers reaching lateral movement in 48 minutes after initial access. AI-enhanced attacks compress this timeline further. When defensive teams need 15 to 45 minutes to triage a single alert and the AI generates hundreds of simultaneous actions, the math favors the attacker. 76% of organizations report they cannot match AI attack speed with their current defensive capabilities.
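The math is easy to work through. The 48-minute window and the 15-to-45-minute triage range come from the figures above. The alert count of 200 is an assumption standing in for "hundreds", and the model assumes each analyst triages alerts one at a time.

```python
import math

BREAKOUT_MINUTES = 48        # time to lateral movement, from the figure above
TRIAGE_RANGE = (15, 45)      # minutes to triage one alert, from the text
SIMULTANEOUS_ALERTS = 200    # assumption: a stand-in for "hundreds"


def analysts_needed(alerts: int, minutes_per_alert: int, window_minutes: int) -> int:
    """Analysts required to triage every alert inside the window,
    assuming each analyst works through alerts sequentially."""
    return math.ceil(alerts * minutes_per_alert / window_minutes)


for minutes in TRIAGE_RANGE:
    needed = analysts_needed(SIMULTANEOUS_ALERTS, minutes, BREAKOUT_MINUTES)
    print(f"{minutes} min per alert -> {needed} analysts")
```

Under these assumptions a team would need between 63 and 188 analysts working in parallel just to finish triage before the attacker starts moving laterally, which is why manual triage alone does not scale.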

XHack defense recommendation: Deploy AI-powered detection that operates at machine speed to match AI-powered attacks. Implement microsegmentation to contain lateral movement even when initial access succeeds (organizations with microsegmentation see 45% lower breach costs). Automate initial response actions through SOAR playbooks. Reduce the human decision points in the response chain for known threat patterns.
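Microsegmentation comes down to a default-deny rule: traffic between segments is blocked unless a flow is explicitly allowed. The sketch below uses hypothetical segment names and ports to show how that limits what a compromised host can reach. Real deployments enforce this in the network or on the host, not in application code.

```python
# Explicitly allowed flows as (source segment, destination segment, port).
# Segment names and ports are hypothetical examples.
ALLOWED_FLOWS = {
    ("workstation", "web", 443),
    ("web", "app", 8443),
    ("app", "db", 5432),
}


def is_allowed(src_segment: str, dst_segment: str, port: int) -> bool:
    """Default deny: a flow passes only if it is on the allowlist."""
    return (src_segment, dst_segment, port) in ALLOWED_FLOWS


def direct_targets(src_segment: str) -> set[str]:
    """Segments a host in src_segment can connect to directly."""
    return {dst for src, dst, _ in ALLOWED_FLOWS if src == src_segment}


# A compromised workstation cannot talk to the database directly.
print(is_allowed("workstation", "db", 5432))   # False
print(direct_targets("workstation"))           # {'web'}
```

With a flat network, the same compromised workstation could reach every segment. Here the attacker has to compromise the web and app tiers in turn, and each extra hop is another chance for detection.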

The AI Cyber Threats Defense Checklist

Email and phishing defense: AI-powered email security with behavioral analysis. Monthly AI-realistic phishing simulations. Multi-channel attack training (email plus voice/video). DMARC, SPF, and DKIM enforcement.

Deepfake defense: Out-of-band verification for financial transactions. Deepfake detection on video platforms. Code word procedures for executive-initiated requests. Targeted training for finance and leadership.

Malware defense: Behavioral EDR/XDR replacing signature-based antivirus. Application allowlisting on critical systems. AI-generated code pattern monitoring. Sandbox analysis with behavioral triggers.

Reconnaissance and automation defense: AI-powered threat detection at machine speed. Microsegmentation for lateral movement containment. SOAR-automated initial response. Continuous attack surface monitoring.

Organizational defense: Security awareness training updated for AI-generated content. Executive deepfake awareness program. Incident response plans updated for AI-speed attacks. Regular red team exercises incorporating AI-enhanced attack techniques.

Frequently Asked Questions

How much more effective are AI cyber threats compared to traditional attacks?

The data shows AI cyber threats are dramatically more effective at scale, though individual success rates are comparable to expert human attackers. AI-generated phishing achieves a 60% success rate, similar to expert human-crafted campaigns, but at 95% lower cost and 40% faster execution. The real advantage is scale and economics: AI generates millions of personalized attacks simultaneously, while human attackers are limited by time and effort. Deepfake attacks exploit a detection gap where 99.9% of people cannot reliably identify synthetic media. AI-generated polymorphic malware evaded 60 out of 63 antivirus engines in a documented test. The combination of comparable effectiveness, dramatically lower cost, and unlimited scale is what makes AI cyber threats fundamentally different from their predecessors.

Can AI be used for defense as effectively as it’s used for attack?

Yes, and it’s already happening. Organizations deploying AI-powered security automation save an average of $2.2 million per breach compared to those without it (IBM 2025). AI-driven threat detection identifies anomalies in under one minute compared to 15 to 45 minutes for manual triage. The global AI cybersecurity market is projected to grow from $29.64 billion in 2025 to $146.52 billion by 2034. The challenge is that defensive AI must be correct every time, while offensive AI only needs to succeed once. This asymmetry means AI defense must be layered: AI-powered detection, AI-augmented response, and AI-informed prevention all working together.

What should organizations prioritize first when defending against AI cyber threats?

Start with the threats that are already hitting the most organizations at the highest volume: AI-powered phishing and deepfake social engineering. Deploy AI-capable email security that detects linguistic patterns characteristic of AI-generated content. Implement out-of-band verification for financial transactions. Update security awareness training to cover AI-generated phishing, deepfake voice calls, and multi-channel attacks. These three steps address the attack vectors that account for the majority of AI-enhanced incidents and the highest financial losses. Then layer in behavioral endpoint detection for polymorphic malware and microsegmentation for lateral movement containment.

AI cyber threats changed the economics of cybercrime. The defense has to change with it. XHack tests organizations against every AI-enhanced attack vector covered in this guide: AI-generated phishing campaigns, deepfake social engineering, polymorphic malware evasion, and AI-automated reconnaissance. Our XHack AI platform provides autonomous penetration testing, real-time threat intelligence, and malware analysis that matches AI-speed attacks with AI-speed detection. If your defenses were built for human-speed attackers, they’re already outdated.
